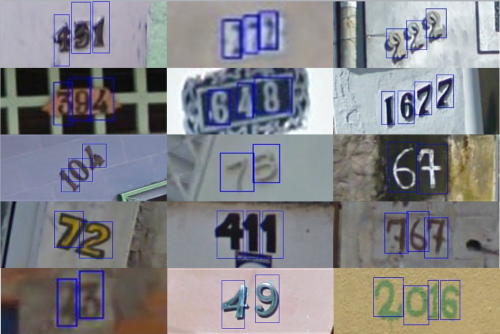

The Street View House Numbers (SVHN) Dataset from the Deep Learning Lab at the Stanford University Computer Science Department.

Walk around your neighborhood and look at the house numbers. Since we started making them at Nutmeg Designs, we can't help but notice house numbers wherever we go. They are fascinating in the many ways they adapt to awkward spaces, and they are often illegible or hidden away better than the spare key under the doormat.

Even more fascinating was discovering this house number recognition project from the Deep Learning Lab within Stanford University's Computer Science Department. The researchers built The Street View House Numbers (SVHN) Dataset of more than 600,000 digit images taken from house numbers in Google Street View imagery. Their goal was to help machines learn to read numbers in the real world and, in turn, improve mapping.

According to Netzer et al., in their paper Reading Digits in Natural Images with Unsupervised Feature Learning, optical character recognition works well on printed documents but remains hard for computers when the characters appear in photographs of the real world. That may explain why many CAPTCHA tests, which check whether you are a human or a computer, ask you to read house numbers in photos. Humans are much better at recognizing that something is a house number and at reading its digits. The researchers considered simplifying assumptions, such as digits being aligned and not overlapping one another, but pointed out that these do not always hold.

Our goal is to make Nutmeg Designs’ house numbers even easier for humans to recognize!

Here’s one of our latest:

Green House Number 280 by Nutmeg Designs. This one went to San Rafael, CA.

Yuval Netzer, Tao Wang, Adam Coates, Alessandro Bissacco, Bo Wu, and Andrew Y. Ng. "Reading Digits in Natural Images with Unsupervised Feature Learning." NIPS Workshop on Deep Learning and Unsupervised Feature Learning, 2011.